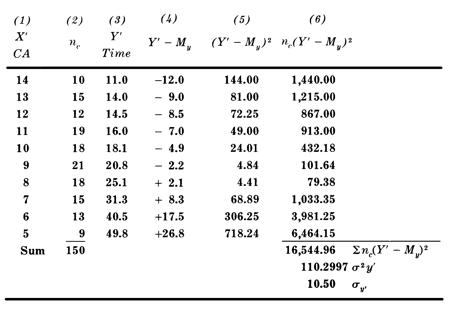

A glance at the Table below will clarify the point I am hoping to make:

Just kidding. Of course many of us had to go through this sort of stuff, in pursuit of some degree in research methodology or experimental psychology – and we did it in a very po-faced and earnest way, taking very seriously the importance of sound research design, hypothesis writing, sample selection, data collection, statistical analysis – all in the hope of validating some theory or in pursuit of rock-solid findings about bed-wetting or aggressive behaviour.

But I have never found any research “findings” which had any relevance for or any effect on a single kid I worked with. Let me explain what I mean by this.

I acknowledge that in a world of outcomes-based program design, and with the need for our programs to satisfy state departments or funding bodies, one must pay homage to some respectable, numerical-sounding data, but fortunately the people who demand so much of this seldom understand much of it themselves. The basic experimental hypothesis might state that kids in x condition who undergo y treatment will demonstrate z results (with df = n-1, p < 0.05 and all other things being equal) and if one plays the game according to these rules one can usually alleviate the anxiety of any higher power.

In my work, every “case” seemed to have the laws of probability stacked against it (or him or her). If we were to believe the epidemiological figures alone, we wouldn’t even admit the next kid who knocks on the doors of our program – nor any of the next hundred. When we look at the sociological data, the poverty level, the family size, the parental marital status, the educational history, the unemployment factor, the dysfunctional interactions, you name them, why on earth would we bother?

Outcomes

But for starters, who agrees on outcomes? In the evocatively illustrated prospectus our program puts out (like the shingle over our door), we often advertise that we deal with one or another kind of youth using these or those methods and that we relieve society of this or that problem. In reality, the youngster we admit usually doesn’t turn out to be the kind we had in mind; inevitably we find ourselves using quite different methods anyway, and sooner or later we realise that in reality the outcome we are heading towards bears no similarity whatever to the original plan.

To parody the so-called “medical model”, it is simply never true that Gary, who is referred to us for a drug problem (or any twelve of your other favourite presenting problems), can be treated with a single targeted shot as if he were a measles patient. After our first real engagement with him, whether this happens on the first evening or eight weeks later, we get to feel that in his shoes we probably would also have a drug problem, which in turn might be but a small aspect of the total load he has brought along to be dumped on us.

And it may well happen that at the end of our “treatment”, Gary leaves us with the self-same drug problem – but maybe also with some hard-won new attitudes to himself and/or others which will see him through many hard times ahead. Our hypothesis (you remember, kids in x condition, y treatment, z results) is rejected out of hand. Experiment fails.

Or does it? The experimental hypothesis with which we started might have been far more optimistic than realistic – and far less than noble. Imagine that it was just to do away with a drug habit. Nothing more. Imagine if we had aimed for nothing more than compliance, or the satisfaction of some or other adults in the kid's world? At what cost might we have succeeded in this? So often our aim is reduced to stopping something which scares us adults, or irritates us, or (dare we think?) something which reminds us of the worst in ourselves. And yes, often we can stop these things. We have the power, whether physical or financial or institutional. But we may not be doing any good with the youngster in whose “best interests” we claim to be doing this work.

Control

Time is also an important factor. On the date when our experiment ends, we collect together all our scores and do the sums and publish the results – which may convey some interesting information – for now. But kids in Child and Youth Care programs are unreliable time-keepers. The intervention we are using may be spot-on, but will show results only when the youngster is sixteen (or twenty-six), not six. How many of us in our work with youth experience developmental timetables in disarray, working, for example, on infantile attachment issues with mid-teen kids?

Very hard to conduct with difficult youth are “controlled” studies, which are the heart of scientific enquiries. How easy it would be if we could nail down all the variables except the “experimental” variable! But our field believes in “least intrusive” treatment models; we try to keep as much of young people’s lives intact and continuing while we are working with them. We follow Beedell’s advice to “admit the whole child”, not just the single troublesome pathology and not just the “acceptable” behaviours. We do not freeze kids into laboratory conditions so that we can manipulate particular variables; we work specifically in their life space, that is, where and while they get on with their lives. And anyway, the “referral problems” don’t exist in isolation but coexist along with all of the youngsters' day-by-day functioning and non-functioning, and coping and messing up, their growing and learning and crashing – through all of which we get to know them and to understand them better.

And when we have a sample, not of 200, but just one, there is too much that is moving around and which defies our attempts to get a bead on anything. Assume that our one kid has a chronological age exactly the mean age of a large (the most reliable kind) sample. This means precisely nothing – except perhaps to an astrologer – about our kid. It is certain (better than just probable) that our “subject's” physical development and his cognitive and emotional and intellectual and moral and any other kinds of development are going to be wildly scattered around the equivalent respective means of everyone else in the large sample – especially as we are working, by definition, with a particular youngster in difficulty who has had to be admitted to our program. His peculiar combination of size, shape, attitudes, abilities, fears, hopes, qualities – and orneriness – is utterly unique.

For now. Because then we must think of the day-to-day (and the minute-by-minute) variances. The complex assessment we might have arrived at in that last paragraph is already out of date. He has lived a few moments, and our figures no longer apply. When we think we have him staked out, he is already different. We are reminded how true is our oft-used phrase about practice “in-the-moment”. What we thought we were going to do when we walked in the door vanishes in the twitch of a nerve in our client’s eye, in the flash of a look we are reading up close, in a word or expression which changes everything – and we must act in the now.

Interventions

More. I don’t believe that one can nail down interventions as discrete variables in any study. You tell me that you are running a behaviour modification (BM) model, and I will tell you that you are running as many BM models as there are staff members – multiplied by as many as there are kids. (I pick BM because it can be the most rigorously defined and controlled intervention.) But whatever approach you may use, there is no accounting for the tone of voice and the smiles or frowns of the staff, all subsumed by the attitudes and dispositions of the staff, not to mention the various ways in which the youth perceive and experience the staff.

There are also those unanticipated and sometimes major phase shifts which take us by surprise when we feel that we have got to know someone. We forget the vulnerability of this kid or the short fuse of another, and one unplanned word or uncontrollable event plunges a kid into rage or despair. Hey, this wasn’t in the list of contents! But we realise that no kid got into our program without a whole sackful of experiences in the past which can be potentially triggered by random words or actions today. We have worked so hard, thought that we were on the right path and were doing everything right – and wham! We're back to square one. The unseen memories or horrors in their histories somehow got past our list of variables when we set this experiment up. Three weeks' work seems to be lost.

The phase shifts are, of course, not only negative. Sometimes things move sideways: a youth has an experience of achievement or learning or insight when he realises that he has strengths and resources within himself, and he no longer needs us. And here we were, violins playing in our heads, and thinking that we had succeeded in building a significant and meaningful relationship with him, when he ups and manages things very nicely for himself thank you. It is enough for us to recognise that in our creation of a healthy climate in our program, a climate of helpful information and trustworthiness and sensible resources, we have made it possible for a youngster to pull himself up by his own bootlaces and reclaim control over his own life.

And just as often, the unanticipated phase shifts are dramatically positive. After long periods of challenge and defiance and clashes of wills, when the “experiment” has seemed perilously in danger of collapse and you have wondered why you put yourselves through all this, a hard and uncompromising eye glistens with a tear of comprehension and relief; you are steeling yourself for a strike of anger and an arm goes round your neck and you find yourself tentatively receiving a hug of appreciation and acceptance – or some other more realistic variant of that scenario. The experiment is merrily in tatters. The statistical indications are off the radar screen of our initial hypothesis, and suddenly things are different.

If there is such a thing as Child and Youth Care research it is probably predicated on the well-known paradigm of science (understanding, prediction, control): we seek to understand a specific phenomenon, to work out what makes it tick – so that we can predict what might happen under similar circumstances in the future – so that we can gain control over the occurrence of the phenomenon for whatever reason. But at the coal-face of Child and Youth Care practice our paradigm heads off in an altogether different direction: we choose to exchange probabilities for possibilities. With individual kids, the only hypothesis which we really have to work with is that in spite of the epidemiology and the statistical “findings” about troubled youth (plural), we believe that with this youth (singular) we can participate in encounters and discoveries and new expectations which will lead who-knows-where. Instead of going down the dead-ends of reductionism (where we would find more and more “accuracy”) we choose the wider expansionist view which questions our certainties and presents us with more complexity.

Forever different

A program I worked with took very seriously this belief in “the power of one” and its need to embody responsiveness to the individual – in order to counter the potential harm of institutionalisation. It said to the team: because this new youngster has arrived today, this place will never be the same again; and to the youth: because you have arrived in this place today, you will never be the same again. This is the thesis of this article. When a troubled kid encounters/is encountered by a Child and Youth Care worker, things will be forever different. At this stage we are not saying in what way or how much they will be different. With a sample of one, there is no way to tell. But there cannot be an honest, open encounter between two people which comes with a road map; it can only offer uncertainty and possibility and new beginnings and god-knows-what. With n = 200 – and, of course, df = (n-1) and p < 0.05 – the scientists may be able to place their bets, but with n = 1, a sample of one, who knows?

All this is not to decry the value of scientific research. Clearly we benefit by a better understanding of certain problems and conditions which affect the lives of kids and their families; clearly we are helped by studies which show which approaches and which interventions are more likely to be useful to troubled kids; and of course we are helped by knowing the life circumstances which hinder or help growing children.

But the next individual child or youth or parent you work with – or the next time you work with a child or youth or parent – you are not working with a known population or with a good-sized experimental sample, or even reliable historical data upon which to base your expectations. You are working with a sample of one, and all empirical bets are off.